Dear all,

I am new to the Radiance discourse group. My name is Francesco, and I am looking into using a Raspberry Pi to capture HDR images. In particular, I came across the piHDR GitHub repository.

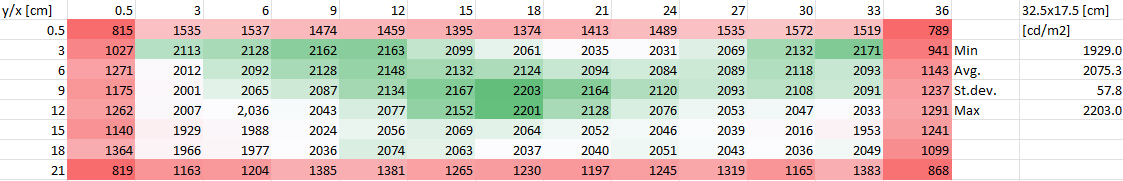

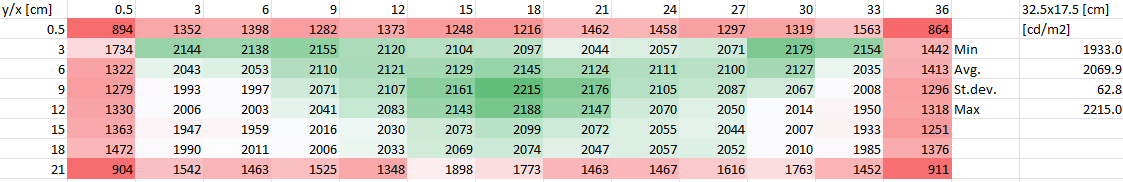

I used an LED panel whose white color temperature can be adjusted. First, I measured the panel with a Konica Minolta luminance meter on a 3 x 3 cm grid, so I know the luminance values across the panel:

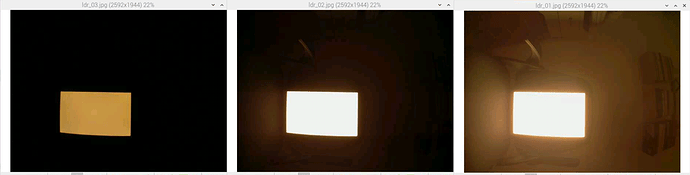

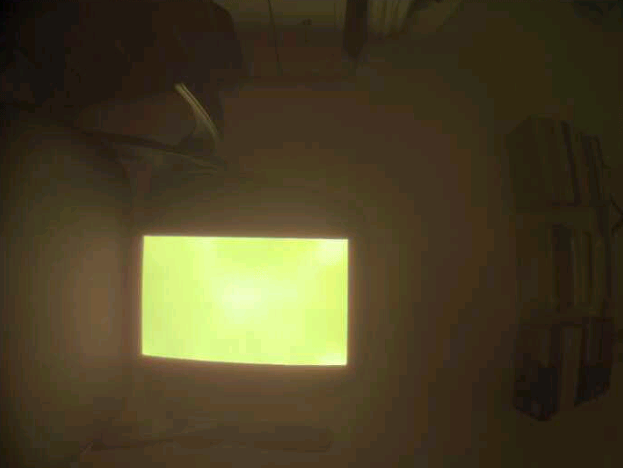

I then set the LED panel to Warm White and positioned it one meter in front of the SainSmart Wide Angle Fish-Eye Camera, connected to a Raspberry Pi 3 Model A+, in a completely dark room.

I modified exposurebracket.py from the piHDR GitHub repository because I want the capture sequence to be very fast. The modified code is:

```python
camera.framerate = Fraction(1, 2)
camera.iso = 100
camera.exposure_mode = 'off'
camera.awb_mode = 'off'
#camera.awb_gains = (1.8,1.8)
camera.awb_gains = (1.5,1.5)
#camera.brightness = 40 #default is 50

#0.8s exposure
#camera.framerate = 1
#camera.shutter_speed = 800000
#camera.capture('ldr_01.jpg')

#0.2s exposure
camera.framerate = 5
camera.shutter_speed = 200000
#camera.capture('ldr_02.jpg')
camera.capture('ldr_01.jpg')

#0.05s exposure
camera.framerate = 20
camera.shutter_speed = 50000
#camera.capture('ldr_03.jpg')
camera.capture('ldr_02.jpg')

#0.0125s exposure
#camera.framerate = 30
#camera.shutter_speed = 12500
#camera.capture('ldr_04.jpg')

#0.003125s exposure
camera.shutter_speed = 3125
#camera.capture('ldr_05.jpg')
camera.capture('ldr_03.jpg')

#0.0008s exposure
#camera.shutter_speed = 800
#camera.capture('ldr_06.jpg')
```
I know that using so few exposures could reduce the accuracy of the result. The three photos are as follows:

The HDR image is then generated using hdrgen, following run_hdrcapture.bsh:

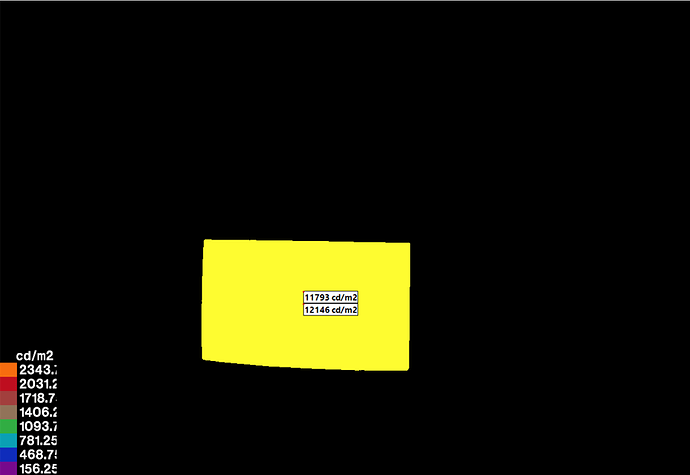

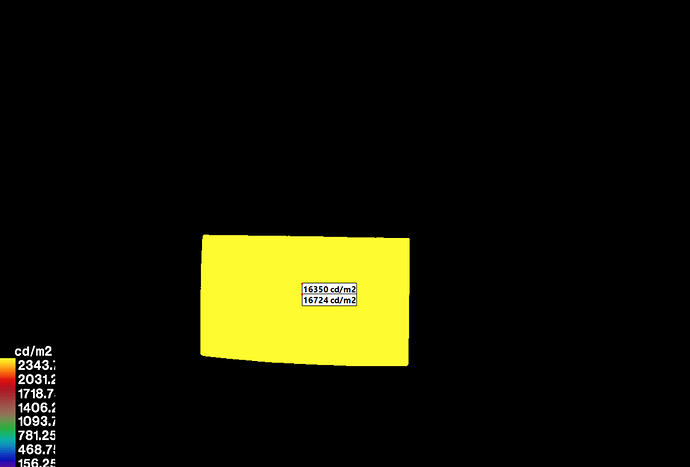

Although the Raspberry Pi can generate the falsecolor image itself using the code in run_hdrcapture.bsh, I moved the .hdr file to a Windows PC and used wxFalsecolor.

If I compare the two points with coordinates (18,9) and (18,12) in the previous table with the two points above, I find a very large difference.
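To put a number on that comparison, the deviation can be expressed relative to the luminance meter reading at each point. The sketch below only illustrates the arithmetic: the luminance values are hypothetical placeholders, not the readings from the table or the falsecolor image, and `relative_difference` is a helper name made up for this example.

```python
def relative_difference(measured, derived):
    """Signed difference of the HDR-derived value relative to the meter reading."""
    return (derived - measured) / measured

# Hypothetical luminance values in cd/m2, NOT the readings from this post.
points = {
    (18, 9): {"meter": 1000.0, "hdr": 400.0},
    (18, 12): {"meter": 900.0, "hdr": 378.0},
}

differences = {
    xy: relative_difference(values["meter"], values["hdr"])
    for xy, values in points.items()
}

for xy, diff in differences.items():
    print(xy, f"{diff:+.1%}")
```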

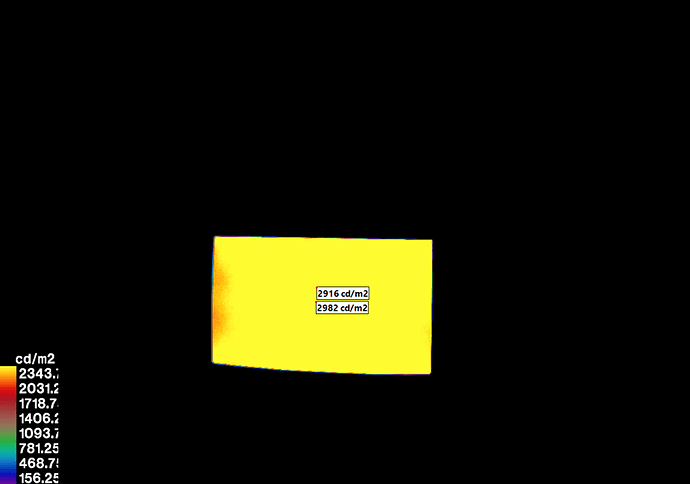

Since pcomb is normally used to correct vignetting, I thought I could use the same program to apply a uniform correction factor. With -s set to 2.4 on a trial basis, I got the following result, which is more consistent with the values I measured earlier with the luminance meter:

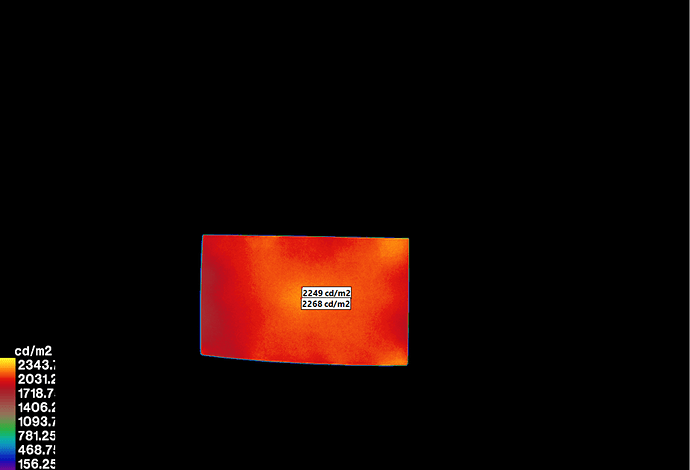
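Instead of choosing the -s value by trial, a uniform factor could be estimated from the paired readings as the mean ratio of meter value to HDR-derived value. This is a sketch of that idea under stated assumptions: the numbers are hypothetical placeholders, and `uniform_scale_factor` is a name invented for this example.

```python
from statistics import mean

def uniform_scale_factor(meter_values, hdr_values):
    """Mean ratio of meter reading to HDR-derived luminance over all points."""
    ratios = [m / h for m, h in zip(meter_values, hdr_values)]
    return mean(ratios)

# Hypothetical luminance pairs in cd/m2, NOT the readings from this post.
meter = [1000.0, 900.0]
hdr = [400.0, 378.0]

factor = uniform_scale_factor(meter, hdr)
corrected = [factor * h for h in hdr]

print(f"scale factor: {factor:.3f}")
print("corrected:", [round(value, 1) for value in corrected])
```

The resulting factor would then be passed to pcomb, for example `pcomb -s <factor> input.hdr > output.hdr`, where the file names are placeholders. A single factor like this rescales every pixel equally, so it cannot account for any dependence on the spectrum of the source.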

I repeated the steps with the LED panel set to cool white:

1. Measurement with the luminance meter:

2. Generation of the .hdr:

3. Falsecolor without applying pcomb:

4. Falsecolor after applying pcomb with the same -s value identified previously:

In this case the difference between 1) and 4) at the two points is obvious, so applying the same uniform factor is not the right approach.

How can I reduce the differences between the values measured with the luminance meter and the values derived from the .hdr file captured with the Pi camera, given that the LED panel can be set to different white color temperatures?