My friend and fellow lighting software author, Ian Ashdown, gave me the following message for the Radiance community.

Ian is responsible for the creation and development of the AGi32 simulation engine, which is highly regarded in the lighting design community (for good reason). He has suggested that it would be a good idea for one or more persons to undertake a validation of Radiance based on CIE 171:2006, a suite of sample lighting scenes with known numerical solutions. I believe Radiance has been partially validated against this dataset. In particular, Mike Donn posted a request for more information with a couple of references to others' work along these lines in 2011:

http://www.radiance-online.org/pipermail/radiance-general/2011-September/008173.html

(Corrected links from post: <http://www.radiance-online.org/community/workshops/2008-fribourg/Content/Geisler-Moroder/RW2008_DGM_AD.pdf> and <http://www.ibpsa.org/proceedings/BS2011/P_1146.pdf>)

I don't know of anyone who has gone through the entire CIE 171 test set, but this is what Ian proposes. Note that there are some tests to watch out for, and whoever works on this will probably be talking to me at multiple points. I look forward to learning something new in the conversation.

One way to approach this would be to set up a wiki or Plone site where individuals could upload their validation tests and open them up for comments. This would require some public documentation of the CIE 171 tests, and this is where it all gets a bit fuzzy. I don't have this document, and if we were to purchase it, I am uncertain as to the legality of sharing (portions of) it.

Ideas and further suggestions are welcome.

Cheers,

-Greg

From: "Ian Ashdown" <[email protected]>

Date: May 6, 2013 7:16:49 PM EDT

This is an academic challenge for the Radiance community.

Validation of lighting design and analysis software has been an issue within

the architectural lighting design community since the first commercial

programs were released in the 1980s. Several studies were performed for

physical spaces, the most notable being:

DiLaura, D. L., D. P. Igoe, P. G. Samara, and A. M. Smith. 1988.

“Verifying the Applicability of Computer Generated Pictures to Lighting

Design,” Journal of the Illuminating Engineering Society 17(1):36–61.

where the authors constructed and measured the proverbial empty rectangular

room while carefully monitoring and controlling photometer calibration,

ambient temperature, luminaire voltage, ballast-lamp photometric factors,

lamp burn-in and other light loss factors, surface reflectances, accurate

photometric data reports, and other issues.

Such studies are useful for interior lighting designs, but considerably less

so for daylight studies where it is nearly impossible to measure, let alone

control, the sky conditions.

They are also mostly pointless for complex spaces with occlusions and

non-diffuse reflective surfaces. Regardless of how carefully the room

parameters might be modeled and controlled, the results only apply to that

particular space.

The alternative is to develop a series of test cases with known analytic

solutions based on radiative flux transfer theory. This became the premise

for:

Maamari, F., M. Fontoynont, and N. Adra. 2006. “Application of the CIE

Test Cases to Assess the Accuracy of Lighting Computer Programs,” Energy and

Buildings 38:869–877.

and:

CIE 171:2006, “Test Cases to Assess the Accuracy of Lighting Computer

Programs.” Vienna, Austria: Commission Internationale de l’Eclairage.

At least two lighting software companies, DIAL (www.dial.de) and Lighting

Analysts (www.agi32.com), validated their software products with respect to

CIE 171:2006. It does not appear, however, that anyone has done this for

Radiance.

As a Radiance community member, you may rightly ask, "Is this even

necessary?" Numerous studies have demonstrated the accuracy and reliability

of Radiance, which may rightly claim the title of being the gold standard

for lighting simulation software. (I am saying this as the Senior Research

Scientist for Lighting Analysts, by the way.)

My answer is yes, but not for the reasons you might think.

Lighting Analysts engaged an independent third party called Dau Design &

Consulting (www.dau.ca/ddci) to perform the CIE 171 validation tests using

AGi32 in 2007. The full report is available here:

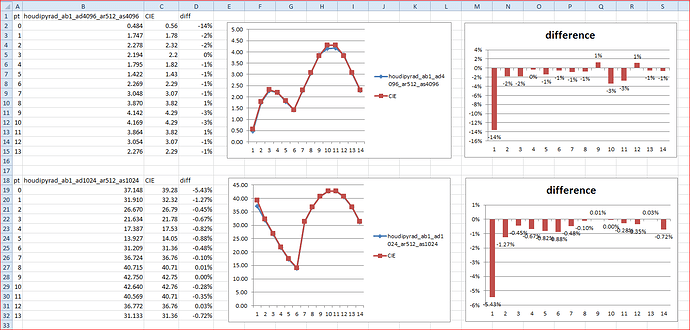

What was interesting about the tests was that AGi32 failed a number of them

on the first pass. As simple as they are, the results were dependent on such

things as the surface meshing parameters and convergence limits (for

radiosity calculations). Roughly equivalent parameters for ray tracing would

be the number of rays and the number of reflections.
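To make the point about ray counts concrete, here is a minimal sketch in Python. It is not one of the CIE 171:2006 test cases and it does not use Radiance; it is a hypothetical stand-in that estimates horizontal illuminance under a sky of uniform luminance L by Monte Carlo sampling and compares it with the analytic value E = πL. All function names and the luminance value are assumptions chosen for illustration.

```python
import math
import random


def analytic_illuminance(luminance):
    """Horizontal illuminance under a uniform-luminance hemisphere: E = pi * L."""
    return math.pi * luminance


def monte_carlo_illuminance(luminance, n_rays, seed=0):
    """Estimate the same quantity with n_rays uniformly sampled sky directions.

    Sampling directions uniformly in solid angle over the hemisphere makes
    cos(theta) uniform on [0, 1); the pdf is 1 / (2 * pi), so the estimator
    is (2 * pi / N) * sum(L * cos(theta)).
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_rays):
        cos_theta = rng.random()
        total += luminance * cos_theta
    return 2.0 * math.pi * total / n_rays


def relative_error_percent(simulated, reference):
    """Percent deviation of a simulated value from its reference value."""
    return 100.0 * abs(simulated - reference) / reference


if __name__ == "__main__":
    sky_luminance = 1000.0  # cd/m^2, arbitrary illustrative value
    reference = analytic_illuminance(sky_luminance)
    for n in (16, 256, 4096, 65536):
        estimate = monte_carlo_illuminance(sky_luminance, n)
        error = relative_error_percent(estimate, reference)
        print(f"{n:6d} rays: {estimate:9.2f} lux, error {error:.2f}%")
```

Run as a script, this prints the estimate and its error for increasing ray counts. The statistical error of such an estimator falls off roughly as the inverse square root of the number of rays, which is one reason a test can fail on the first pass with default settings and pass once the parameters are raised.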

It was easy enough to optimize the parameters to obtain the best results, which

is what an experienced user would likely do anyhow for complex environments.

Note that I said "experienced user"; that is, someone who has learned by

trial and error what works best for a given software product. CIE 171:2006

has the potential (so far unrealized) of providing beginning users with a

cookbook of sorts to document and choose the best rendering parameters for

various situations. This alone would be valuable, but it becomes even more

useful when the user needs to work with two or more lighting simulation

programs and hopefully obtain similar results.

Validating AGi32 against CIE 171:2006 also led to a few puzzles where no

amount of parameter tweaking produced acceptable results. Further in-depth

analysis proved that the tests themselves were incorrect, being based on

invalid assumptions. These are documented in the report, but sadly CIE

Division 3 has not seen the need to issue an amendment to CIE 171. There are

also at least two other tests (5.13 and 5.14) that have been called into

question.

These issues notwithstanding, CIE 171:2006 is a useful document, which leads

to the academic challenge ...

Validation of Radiance against CIE 171:2006 involves more than just setting

up a few simple models and running the tests. There will undoubtedly be test

cases where the calculated results do not even begin to agree with the

published results. Optimizing the program parameters will demand an in-depth

understanding of what they actually mean and do, and so offer a valuable

learning experience for students doing, for example, a class project.

Digging deeper, there will likely be other issues with the test cases where

invalid assumptions lead to incorrect published results, subtle or

otherwise. Just because CIE 171:2006 was derived from a PhD thesis does not

mean that it is entirely correct. This, combined with proposals for more

rigorous and comprehensive tests, could form the basis for an interesting

MSc thesis. (The tests are mostly derived from radiative flux transfer

theory and as such are aimed at radiosity-based methods. If these are not

suitable for ray tracing methods, what would be reasonable equivalent

tests?)

Finally, it would alleviate a lot of angst regarding lighting software

validation in the architectural lighting design community if the major

commercial and open source products were validated against CIE 171:2006

(with acknowledgement that there are no overall pass/fail results). Ideally,

each company or software developer would conduct the tests in-house because

they know their products best. The catch here is that they would then be

expected to publicly post both the results and their test models so that

anyone could use their products to confirm the test results. This would

ensure that the test results remain valid as the products undergo continual

improvement.

In terms of Radiance, it could be a community effort, with various people

contributing test models and proposing best parameter settings. In addition

to providing a useful "best practices" guide for Radiance, this would likely

result in Radiance validating CIE 171:2006. It would also be welcomed as a

valuable contribution by the architectural lighting design community.

Ian Ashdown, P. Eng., FIES

President

byHeart Consultants Limited

http://www.helios32.com
